Ranking Engine 2252143974 Digital System

The Ranking Engine 2252143974 Digital System turns incoming data into prioritized outcomes and is designed to run efficiently at the edge. It emphasizes deterministic governance, scalable decision-making, and auditable decision paths. Its architecture reduces latency through parallel processing and local storage while distilling noisy signals into actionable metrics. Typical use cases include rapid risk scoring, compliant automation, and transparent AI governance. The framework also raises questions about trade-offs and integration, in particular how its stack aligns with existing systems and governance standards.

What the Ranking Engine 2252143974 Digital System Solves

The Ranking Engine 2252143974 Digital System addresses the core challenge of turning disparate data into reliable, prioritized outcomes. It identifies gaps in insight, aligns signals with objectives, and converts noisy inputs into actionable metrics. By addressing edge latency and data partitioning, it lets teams respond at scale, make disciplined decisions, and act quickly without sacrificing accuracy or accountability.
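To make the idea of aligning signals with objectives more concrete, here is a minimal sketch of weighted scoring followed by a deterministic sort. The signal names, the weights, and the `Item`, `score`, and `rank` helpers are all hypothetical; the article does not describe the system's actual scoring method.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    item_id: str
    # Hypothetical signals, each assumed to be normalized to [0, 1].
    signals: dict = field(default_factory=dict)


def score(item, weights):
    """Weighted sum of signals; a missing signal contributes 0."""
    return sum(w * item.signals.get(name, 0.0) for name, w in weights.items())


def rank(items, weights):
    """Sort by descending score, breaking ties by item_id so the
    same input always produces the same order."""
    return sorted(items, key=lambda it: (-score(it, weights), it.item_id))


# Illustrative weights expressing the objective (assumed, not from the source).
WEIGHTS = {"risk": 0.6, "recency": 0.3, "volume": 0.1}

items = [
    Item("a", {"risk": 0.2, "recency": 0.9}),
    Item("c", {"risk": 0.8, "recency": 0.1, "volume": 0.5}),
    Item("b", {"risk": 0.8, "recency": 0.1, "volume": 0.5}),
]

ranked_ids = [it.item_id for it in rank(items, WEIGHTS)]
print(ranked_ids)
```

The explicit tie-break on `item_id` is what keeps the ordering reproducible when two items score the same, which matters if rankings need to be audited later.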

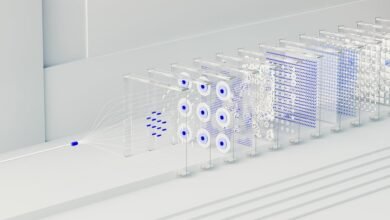

How Its Architecture Delivers Edge-Ready Performance

The design relies on modular components and deterministic data paths, which reduce variability and make timing predictable. Parallelized processing and localized storage minimize edge latency, while hierarchical caching speeds up frequent queries. Scalable indexing adapts to workload growth without compromising throughput, so decisioning stays responsive. The approach balances autonomy at the edge with centralized governance.
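As an illustration of hierarchical caching, the sketch below places a small first-level cache in front of a larger second-level cache and falls back to a loader function on a miss. The class names, the cache sizes, and the LRU eviction policy are assumptions chosen for the example, not details taken from the system.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used key."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._data = OrderedDict()

    def get(self, key):
        if key not in self._data:
            return None
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def put(self, key, value):
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # drop the oldest entry


class TieredCache:
    """Two-level lookup: L1, then L2, then the origin loader.
    Note: a stored value of None is treated as a miss in this sketch."""

    def __init__(self, l1_size, l2_size, loader):
        self.l1 = LRUCache(l1_size)
        self.l2 = LRUCache(l2_size)
        self.loader = loader
        self.hits = {"l1": 0, "l2": 0, "origin": 0}

    def get(self, key):
        value = self.l1.get(key)
        if value is not None:
            self.hits["l1"] += 1
            return value
        value = self.l2.get(key)
        if value is not None:
            self.hits["l2"] += 1
        else:
            value = self.loader(key)  # slow path, e.g. remote storage
            self.hits["origin"] += 1
            self.l2.put(key, value)
        self.l1.put(key, value)  # promote into the fastest tier
        return value


cache = TieredCache(l1_size=2, l2_size=4, loader=lambda k: k.upper())
for key in ["a", "b", "a", "c", "a", "b"]:
    cache.get(key)
print(cache.hits)
```

Tracking hits per tier gives a rough view of how often requests reach the slow path, which is the number a latency budget ultimately depends on.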

Real-World Use Cases: From Data Streams to Decisioning

Edge-anchored analytics translate continuous data streams into timely, automated decisions by combining deterministic processing with localized storage. Real-world deployments demonstrate rapid risk scoring, personalized routing, and compliant automation across sectors. The approach supports AI governance and clear latency budgeting, enabling autonomous systems to act with transparency while preserving user freedom and scalability. Disciplined, proactive evaluation informs ongoing optimization and governance alignment.
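One way to picture stream-to-decision processing is a per-entity running risk score that triggers an action once it crosses a threshold. The sketch below uses an exponentially weighted moving average; the smoothing factor, the threshold, and the `allow`/`review` actions are illustrative assumptions rather than the system's documented behavior.

```python
def ewma_decisions(events, alpha=0.5, threshold=0.7):
    """Yield (entity, score, action) for each (entity, value) event.

    Each entity keeps its own running score. The first event for an
    entity seeds the score with its own value. Processing is purely
    sequential, so the same event order yields the same decisions.
    """
    state = {}
    for entity, value in events:
        previous = state.get(entity, value)
        current = alpha * value + (1 - alpha) * previous
        state[entity] = current
        action = "review" if current >= threshold else "allow"
        yield entity, current, action


# Hypothetical stream of (entity, risk signal in [0, 1]) events.
stream = [("u1", 0.2), ("u1", 1.0), ("u2", 0.9), ("u1", 1.0)]

decisions = list(ewma_decisions(stream))
actions = [action for _, _, action in decisions]
print(actions)
```

Because the score depends only on the ordered events for each entity, replaying a recorded stream reproduces every decision, which is useful for audits.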

Practical Implementation and Trade-Offs for Your Stack

Practical implementation hinges on aligning architecture, data governance, and latency budgets with concrete business outcomes. Teams balance fragmented indexing against disciplined data lineage, measuring impact across compute, storage, and retrieval paths. Trade-offs emerge between freshness and cost, which makes explicit latency budgeting a requirement.

The result is a scalable, auditable stack that preserves freedom of experimentation while enforcing governance and predictable performance.
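Explicit latency budgeting can be as simple as assigning each stage a share of the end-to-end target and comparing measurements against it. The following sketch assumes three stages (compute, storage, retrieval) with made-up budgets and measurements in milliseconds; `StageBudget` and `check_budget` are hypothetical helpers.

```python
from dataclasses import dataclass


@dataclass
class StageBudget:
    name: str
    budget_ms: int


def check_budget(budgets, measured_ms, total_budget_ms):
    """Compare measured stage latencies against their budgets.

    Returns the total latency, whether it fits the end-to-end budget,
    and the overrun in ms for each stage that exceeded its share.
    """
    overruns = {
        b.name: measured_ms[b.name] - b.budget_ms
        for b in budgets
        if measured_ms[b.name] > b.budget_ms
    }
    total = sum(measured_ms[b.name] for b in budgets)
    return {
        "total_ms": total,
        "within_total": total <= total_budget_ms,
        "overruns": overruns,
    }


# Illustrative numbers only (assumed, not measured from any real system).
budgets = [
    StageBudget("compute", 20),
    StageBudget("storage", 10),
    StageBudget("retrieval", 15),
]
measured = {"compute": 18, "storage": 14, "retrieval": 12}

report = check_budget(budgets, measured, total_budget_ms=45)
print(report)
```

In this example the request still meets the end-to-end target even though storage overruns its share, which is the kind of case where a team has to decide whether to spend more on storage or accept the slack borrowed from other stages.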

Conclusion

The Ranking Engine 2252143974 Digital System? A paragon of edge calm, converting noise into metrics with machine-like humility. Of course it promises auditable governance and latency budgets, because who wouldn’t want a system that dutifully prioritizes risk scores while quietly storing data at the edge? Irony aside, its disciplined architecture turns disparate streams into deterministic decisions, though the real triumph lies in making that complexity almost invisible.